AI Training Video Analytics: How to Prove Learners Actually Understood the Video

AI creates training videos fast—but speed doesn't prove learning. Learn how interactive video, xAPI, SCORM, branching, and predictive analytics turn AI video into measurable training.

AI video solves production speed. Interactive video analytics solves proof: what learners watched, clicked, chose, answered, replayed, skipped, and completed.

TL;DR

AI video production makes training content faster to create, update, and localize. But faster video does not prove learning. Passive video analytics usually stop at views, watch time, and completion. Interactive video analytics show what learners did inside the video: quiz attempts, branch choices, replays, pauses, hotspot clicks, resource opens, and xAPI events. SCORM is useful for LMS summary reporting. xAPI gives teams a richer event trail when the experience is configured to send those events. The next advantage in AI training video is not speed alone. It is measurable learning.

- AI video makes training content faster to produce, but speed does not prove comprehension.

- Interactive video creates measurable learner actions: answers, branches, replays, clicks, and completions.

- SCORM is most commonly used for LMS summary reporting. xAPI can capture granular event data when the experience is instrumented correctly.

Key Takeaways

- AI training video analytics is the measurement of how learners interact with AI-generated or AI-assisted training videos — through completions, quiz attempts, branch selections, replay behavior, and xAPI events — to produce stronger evidence of understanding.

- Interactive video is a format that embeds structured learner actions inside the video itself, producing behavioral data beyond passive watch time.

- xAPI is a learning data standard that can capture timestamped event-level activity across multiple platforms and devices when the video experience is configured to send those events to a Learning Record Store.

- SCORM is most commonly used for LMS summary reporting: completion status, score, pass/fail, and time spent.

- Predictive learning analytics uses early learner behavior signals to flag risk patterns before the final failure point — when enough behavioral data exists.

Introduction

Many training teams still operate on a flawed assumption: produce more videos, and learning improves.

It sounds reasonable. But production volume alone does not prove learning.

AI is already embedded in L&D production workflows. Synthesia's 2026 AI in Learning and Development Report, drawing on data from 421 professionals, found that 87% of respondents already use AI — with common uses including voice generation, content and quiz drafting, video creation, and translation. 52% use AI specifically for video creation, and 84% cite faster production as a top benefit.

That progress is real. Training teams genuinely need speed. But faster production creates a second problem: teams can now publish more training content than they can evaluate. More videos mean more opportunities for learners to press play, leave the tab open, skip the difficult section, miss the key concept, and still appear "complete" in a basic reporting system.

The measurement gap is visible in LinkedIn's 2024 Workplace Learning Report: fewer than 5% of large-scale upskilling programs had advanced far enough to measure success — with measurement stage figures sitting at 5% in 2022 and 4% in 2024.

This article explains the measurement gap created by passive AI video, then shows how interactive video, SCORM, xAPI, branching, and learner analytics give training teams stronger evidence of understanding.

See how Clixie turns passive video into interactive training with quizzes, branching, SCORM, xAPI, and learner analytics.

Passive AI Video vs. Interactive AI Video with Analytics

AI Video Solved the Production Bottleneck

AI video production is a compressed workflow that replaces weeks of studio scheduling, voiceover recording, editing, and localization with automated generation — cutting what used to take months down to days.

Before AI video, even a small training update could trigger a full production chain: script, narration, screen capture, editing, captions, LMS upload, review, and localization. Every step required a different person and tool. A single product change could touch the entire chain.

AI compresses that chain. But compression creates a second problem: teams can now publish more training content than they can evaluate.

Synthesia's 2026 report found that the top two benefits of AI in L&D are faster production (84%) and a better learner experience (66%). That combination is compelling — but only if the team has a system for measuring whether the learner experience is actually producing learning, not just content.

The New Bottleneck Is Proof of Learning

Training evidence is the data trail that shows whether a learner paid attention, made decisions, answered correctly, recovered from confusion, and completed the required outcome — not whether the video file reached the end.

LinkedIn's 2024 Workplace Learning Report found that fewer than 5% of large-scale upskilling programs had advanced far enough to measure success in either 2022 or 2024. Most teams still rely on views, watch time, completion percentage, and drop-off points.

That data is useful for content analytics. It is insufficient for training. It does not show whether the learner understood the content, which decision path they chose, which quiz question exposed a knowledge gap, or whether a learner paused repeatedly at a confusing section.

Field Note: The Illusion of Completion

In my work evaluating legacy training programs, the "aha" moment for most training directors happens during our first data audit. Recently, we analyzed passive video data for the BolaWrap Training Videos. The LMS dashboard proudly displayed an 88% "completion rate" for a mandatory 12-minute field deployment protocol video. However, when we looked at the actual engagement metrics, the average watch time was just 2 minutes and 15 seconds. Trainees were hitting play, minimizing the tab, and letting the video run in the background just to trigger the LMS completion flag. The department thought their personnel were fully trained and compliant; the data proved they were just checking a box.

Individual learner data was anonymized to protect privacy.

Understanding what training engagement actually means starts with what learners choose to do inside the content — not whether the video file finished running.

Passive AI Video Still Has the Same Old Problem

Passive AI video is a generated training asset that plays from beginning to end without requiring the learner to make a decision, answer a question, or demonstrate understanding — producing the same shallow completion data as any traditional video, regardless of how fast or cheaply it was made.

A passive training video can show 90% completion and still fail as a learning tool. A 12-minute compliance video might be marked complete after playback finishes, even if the learner watched only the first two minutes, left the tab open, skipped the policy example, and failed the follow-up question. The completion record says "done." The learning evidence says "not yet."

Moodle's workplace training data found that 46% of employees speed up training videos or let them play while multitasking, while 14% mute their laptops or click through questions without actually participating. Completion records do not capture any of that.

Field Note: Watched Does Not Mean Learned

I see this disconnect between completion and comprehension constantly. A recent example involved Blue River Financial Services rolling out a high-stakes data privacy module. One of their branch managers "completed" the 20-minute passive video course, logging the full watch time without skipping. But two days later, that same manager failed a simulated phishing assessment, scoring just 40% on the exact concepts covered in the video. Because the video lacked interaction, the L&D team had no idea she had tuned out during the critical section on email verification. The completion checkmark gave them a false sense of security right up until she failed the practical application.

Individual learner data was anonymized to protect privacy.

Passive video is a poor fit for high-stakes training: compliance modules, product onboarding, sales certification, healthcare education, scenario-based judgment calls, and any module where downstream behavior matters. These use cases need interaction — moments where the learner has to choose, answer, confirm, or apply the concept. That interaction is not just pedagogically better. It is where measurable data is created.

Interactive Video Turns Training Into Measurable Learning

Interactive video is a training format that embeds structured learner actions — quizzes, branches, hotspots, and forms — directly inside the video experience, creating behavioral data at every decision point instead of a single completion record at the end.

The evidence for active learning formats is consistent. Engageli's active learning study found that its highest-engagement condition produced 13 times more learner talk time, 16 times more nonverbal engagement, and test scores 54% higher than traditional lecture methods. That is not a claim that every interactive video will outperform every passive video. It is evidence of the underlying principle: participation produces stronger learning signals than passive consumption.

The interactions that generate measurable data include:

- Knowledge checks and quizzes

- Branching scenarios and decision paths

- Clickable hotspots and resource cards

- Polls and surveys

- In-video forms and data capture

- Completion gates that require a response to continue

- Bookmarks and chapter navigation

- Language switching

- In-video feedback prompts

- Calls to action

Most passive video systems tell you whether a learner opened, watched, or completed the video. Interactive video can show what they did along the way — which branch they chose, where they failed, what they replayed, and whether they recovered. That is the difference between a completion record and a diagnostic trail.

What Clixie Can Track Inside an Interactive Training Video

Depending on how the experience is configured, Clixie can help teams track:

- Video starts and completions

- Chapter-level engagement

- Pauses and replays

- Quiz attempts and scores

- Question-level responses

- Branch selections

- Hotspot clicks

- Resource opens

- Form submissions

- Language selection

- SCORM completion and score

- xAPI event activity

This is not a complete list of what is possible across every setup — it depends on how the experience is built and what the LMS or LRS is configured to receive. But it illustrates the gap between a platform that hosts video and a platform that instruments the learning experience.

SCORM Tells You the Summary. xAPI Tells You the Story.

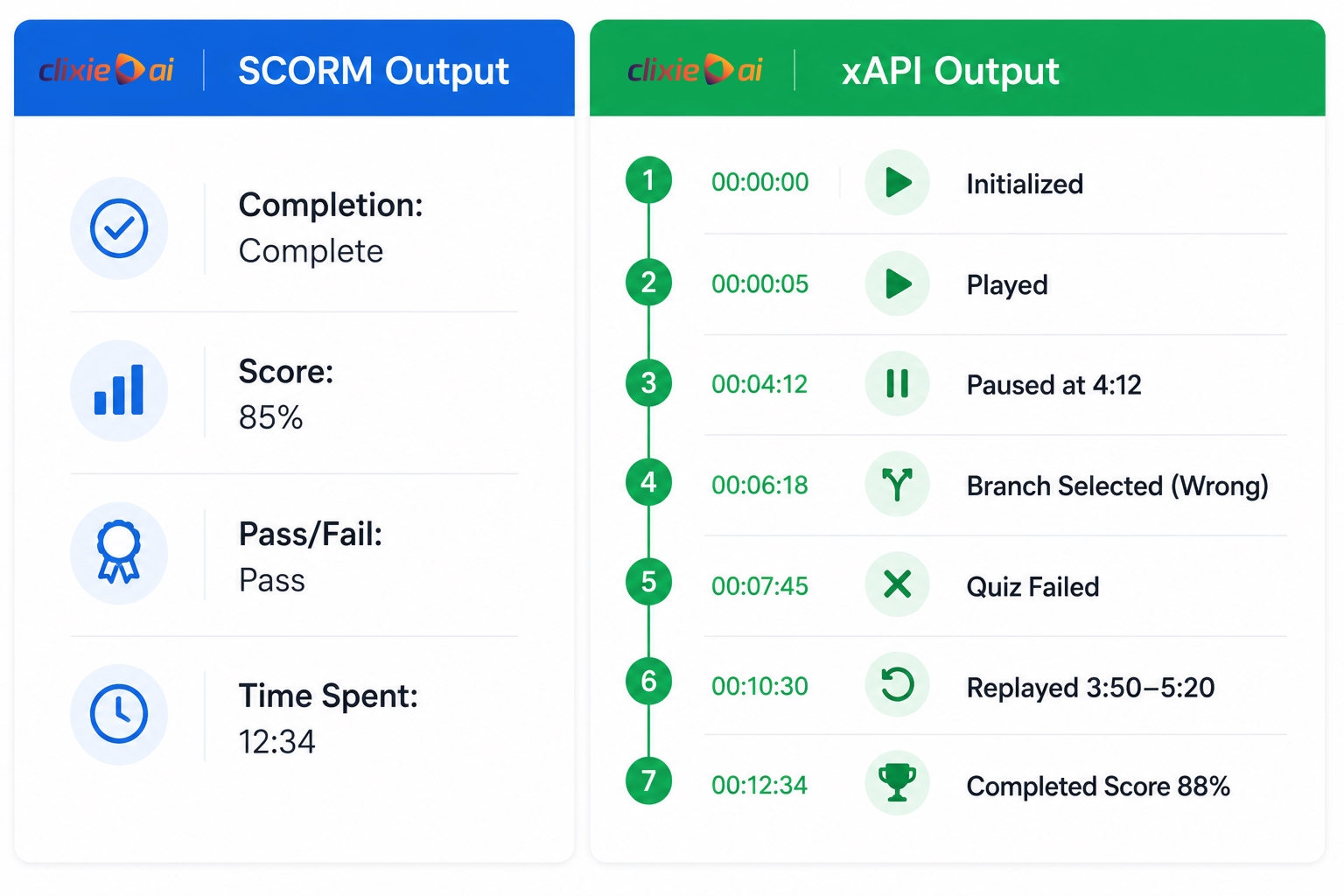

SCORM is most commonly used for LMS summary reporting — completion status, score, pass/fail, and time spent — while xAPI can capture a timestamped event trail of configured learner actions across the full experience, stored in a Learning Record Store outside the LMS.

As iSpring's comparison guide notes, SCORM supports compatibility and basic learner tracking in an LMS, while xAPI captures more detailed experiences across platforms and devices and stores data in an LRS. SCORM remains common because it works reliably with most LMS setups. Some SCORM 2004 implementations can store additional interaction data, but SCORM was not designed for the same cross-platform event stream that xAPI supports.

xAPI — also called the Experience API or Tin Can API — uses a subject-verb-object statement format to capture learning activity in more detail. What those statements include depends on how the platform and experience are configured.

xAPI does not create useful analytics by itself. The platform must decide which events to send, how to name them, and where to store them.

A typical SCORM report might say: "The learner completed the module and scored 85%."

A properly instrumented xAPI setup can record: "The learner paused at 4:12, selected Branch B, answered Question 2 incorrectly, replayed the segment from 3:50 to 5:20, then completed the module with a score of 88%."

One is a record. The other is a trail — and it is the trail that tells you what to fix. Learn more about SCORM video training integration and how it fits into an LMS workflow.

When SCORM Is Enough and When xAPI Is Better

SCORM is usually enough when the training requirement is straightforward: launch a course in an LMS, record completion, send a score, and mark pass or fail.

xAPI is better when the team needs to understand behavior inside the experience — what learners clicked, where they paused, which branch they selected, which resource they opened, and which interaction patterns preceded failure or success.

Both can be used at the same time. Many organizations use SCORM to send a clean completion record to the LMS while also using xAPI to build a richer behavioral data set in an LRS. That gives the compliance team the record they need and the L&D team the diagnostic trail they need.

Use both when the LMS needs clean completion records and the learning team needs behavioral analytics.

The Real Value Is Prediction, Not Just Reporting

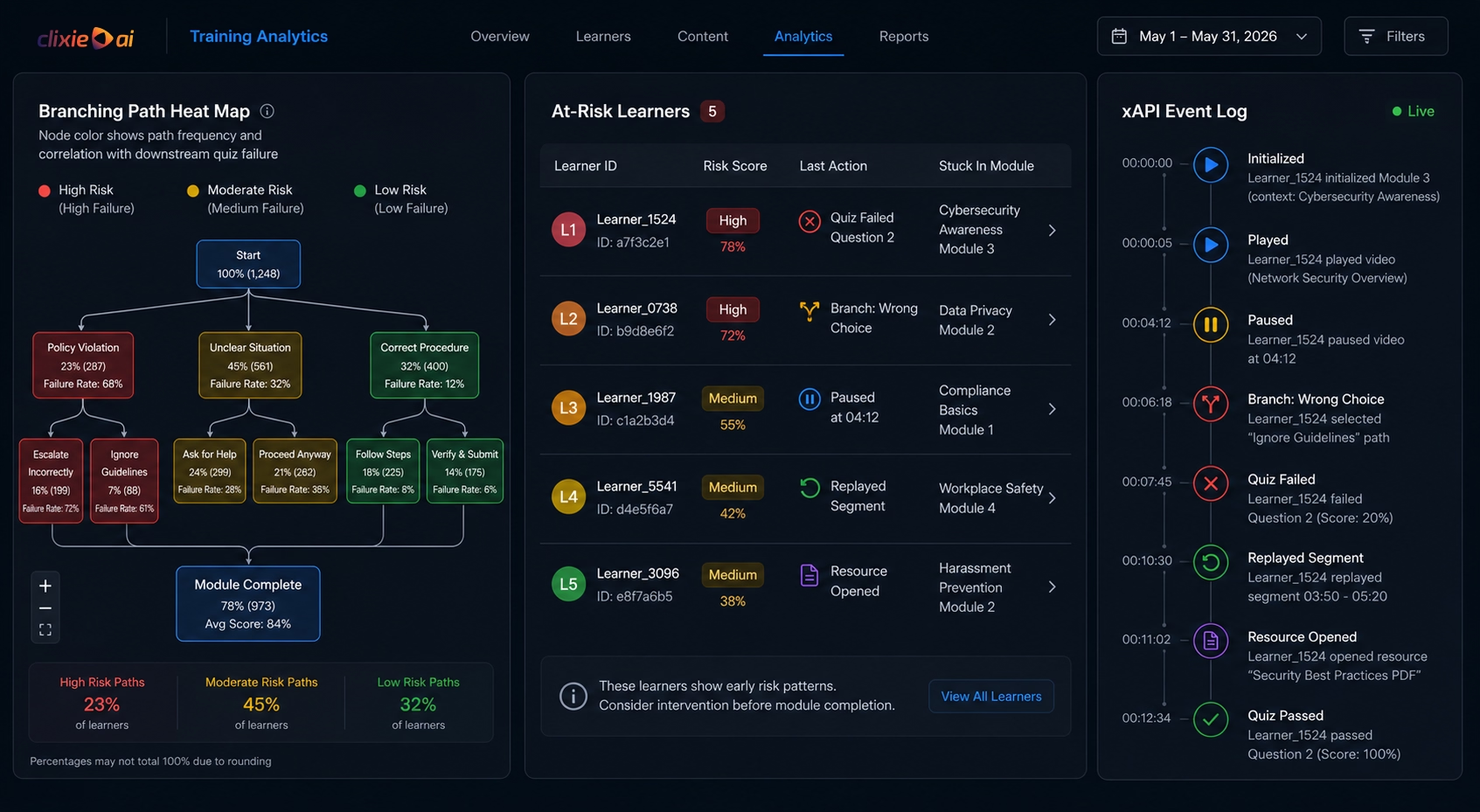

Predictive learning analytics is an approach that analyzes early learner behavior signals — repeated pauses, wrong branch selections, failed quiz attempts — to flag risk patterns before the final failure point, when enough behavioral data exists and the experience is configured to capture it.

Reporting tells you what happened. Prediction tells you what is about to happen — while there is still time to act. Most training teams discover problems at the end of a course or at a compliance deadline. Prediction changes that timeline.

Prediction quality depends on data volume, event quality, historical patterns, and how the risk model is configured. A dashboard cannot predict what it does not measure. But when an experience is well-instrumented, early patterns become readable.

In interactive video, early risk signals can appear well before the final score. A learner who pauses twice in the same section, selects the lower-performing branch, fails the next quiz, and replays the explanation is not just "engaged." They may be struggling. That pattern gives the training team a chance to clarify the section, add a resource, or trigger follow-up before the learner fails the course.

Field Note: Intervening Before the Drop-Off

A clear example of predictive analytics in action came from TechInnovate IT Company using Clixie for their technical support onboarding. During week one, our xAPI tracking flagged a critical signal: 65% of a new hire cohort selected the exact same incorrect branch in a simulated network escalation scenario. Because this data surfaced immediately — while the learners were still active in the module — the training manager paused the scheduled curriculum and deployed a targeted 15-minute clarification session on that specific escalation protocol. By addressing the gap early, rather than waiting for the 30-day performance review, TechInnovate reduced repeat onboarding support tickets by 42% over the next quarter.

Individual learner data was anonymized to protect privacy.

What Training Teams Should Measure Instead of Views

Training measurement is the systematic tracking of engagement quality, comprehension signals, decision behavior, risk indicators, and business outcomes that proves a video produced learning — not just playback — and identifies exactly where to improve.

Stop at views and you have content analytics. Start at behavior and you have learning intelligence.

Engagement quality:

- Starts

- Completions

- Replays

- Pauses

- Drop-off points

- Chapter-level engagement

Comprehension:

- Quiz attempts

- Correct and incorrect answers

- Score distribution

- Question-level failure rates

- Retake behavior

Decision behavior:

- Branch selections

- Scenario choices

- Hotspot clicks

- Resource opens

- Form submissions

Risk indicators:

- Repeated pauses at the same timestamp

- Consecutive quiz failures

- Early exits

- Low interaction rate

- Missed checkpoints

Business outcomes:

- Certification completion

- Compliance readiness

- Sales readiness

- Customer onboarding progress

- Support ticket reduction

- Time to competency

This is where interactive video analytics connects to outcomes the business already measures. Video engagement data is most useful when combined with other measures of effectiveness — performance, sales, efficiency, or cost reduction — not treated as a standalone proof of learning.

From Static Training Videos to Updateable Learning Systems

The strongest use case for AI video is not one great video. It is a repeatable system that improves as it runs.

The point is not to create more content. The point is to shorten the loop between learner behavior and content improvement.

In practice, that means training can be updated, localized, measured, and improved without rebuilding the entire asset. Traditional video is a finished asset — you produce it, publish it, and replace it when it goes stale. A well-instrumented interactive video is different. It is a living tool that gets better with each cohort.

That system works like this:

- Create or upload a training video — recorded, AI-generated, or a repurposed live session

- Use AI to auto-generate chapters, captions, quizzes, and a transcript

- Add interactions: branches, hotspots, forms, and calls to action

- Publish to an LMS, website, email sequence, or internal portal

- Track learner behavior through SCORM completion data and xAPI event activity

- Identify confusion points, drop-off patterns, and at-risk signals

- Update the underperforming segment — not the entire video

- Republish without rebuilding from scratch

That loop is what separates a content library from an evidence loop. Branching video software built on this model does more than deliver training — it generates the data that improves training. And employee onboarding videos built this way get measurably better over time instead of sitting in an LMS folder growing stale.

What Video Analytics Cannot Prove by Itself

Video analytics shows behavior inside the learning experience. It cannot prove job performance on its own, and making that claim weakens the measurement case rather than strengthening it.

A learner can pass every quiz and still fail to apply the skill in the field. That is why the strongest learning measurement connects video analytics with other signals: manager observation, CRM data, support ticket volume, compliance audit results, certifications, or on-the-job performance metrics.

Interactive video gives training teams better evidence than passive video. It is not the entire measurement system. The goal is not to replace all other evaluation methods — it is to replace the weakest one: completion records that prove nothing except that a file finished playing.

Conclusion

AI has made training video production faster. That is valuable, but it is no longer the differentiator.

The real question is whether the video produced stronger evidence of learning. Did the learner understand the concept? Did they choose the right scenario path? Did they recover after a wrong answer? Did they complete the module because they learned, or because the video reached the end?

Passive video cannot answer those questions well. Interactive video can.

With quizzes, branching, hotspots, captions, SCORM, xAPI, and AI video localization, training teams can move from content production to learning proof. That is where AI video becomes more than a faster asset. It becomes a measurable training system.

Turn passive training videos into measurable learning experiences.

Use Clixie to add quizzes, branching, hotspots, captions, localization, SCORM/xAPI tracking, and learner analytics to your videos.

Create an Interactive Video · Watch Demo

FAQ

What is AI training video analytics?

AI training video analytics is the measurement of how learners interact with AI-generated or AI-assisted training videos. It can include completions, quiz attempts, branch selections, replays, pauses, hotspot clicks, resource opens, SCORM data, and xAPI events, depending on how the video experience is configured.

Why are views not enough for training videos?

Views only show that a video was opened or played. They do not prove that the learner understood the content, answered correctly, made the right decision, or applied the concept. Moodle's workplace training data found that 46% of employees speed up videos or let them play while multitasking, which means completion records alone can significantly overstate actual engagement.

What is the difference between SCORM and xAPI for video training?

SCORM is most commonly used to send completion, score, pass/fail, and time data to an LMS. xAPI can capture more granular event data — such as plays, pauses, branch selections, quiz attempts, and resource clicks — when the learning experience is configured to send those events to a Learning Record Store.

Can interactive video work with an LMS?

Yes. Interactive video can work with an LMS when it is published, embedded, or packaged in a compatible format. SCORM is often used for LMS completion and score reporting, while xAPI can provide richer event-level analytics in a separate LRS. Many teams use both at the same time.

How does interactive video improve learning measurement?

Interactive video creates measurable learner actions inside the video experience. Quizzes, branches, hotspots, forms, and resource clicks help training teams see where learners engage, struggle, recover, and complete required outcomes — not just whether the video file played to the end.

.png)

.png)