How AI Music Video Generators Are Changing Visual Storytelling for Independent Artists

Stop spending thousands on music videos. Discover how AI tools like Freebeat empower independent artists to create pro-level, cinematic visuals without editing skills.

Why AI Video Generation is the New Standard for Independent Music Promotion

For most independent artists, a professionally produced music video has always been out of reach. Not because of a lack of creative vision, but because realizing that vision required a director, a camera operator, post-production expertise, and a budget that most self-funded releases cannot justify. The recording gap between independent and signed artists has largely closed thanks to affordable home studio tools. The video gap has persisted.

AI music video generation is changing that in a meaningful way. The tools available today don't just produce adequate visual content quickly — they enable cinematic storytelling, beat-synced editing, stable character performance, and multi-platform distribution from a single workflow, with no editing skills required. This article examines what those tools are actually doing, why it matters for independent artists, and what separates current-generation platforms from the template-based tools that came before.

Why Templates and DIY Editing Both Failed Independent Artists

Earlier automated video tools offered speed at the cost of creative coherence. Template-based generators laid pre-built animation loops over uploaded audio — fast to produce, but visually disconnected from the music itself. A slow, atmospheric ballad and a high-energy club track would produce near-identical output if run through the same template. The visuals didn't follow the song's rhythm, reflect its emotional storyline, or carry any concept-driven visual logic. For artists whose identity depends on mood-based visuals that actually mean something in relation to their music, this was a placeholder, not a solution.

The alternative — learning professional editing software — is a legitimate path but a long one. Cinematic shot composition, the logic of A-roll versus B-roll, knowing when a close-up serves the viewer better than a wide shot: these are skills that editors and directors develop over years. The deeper irony is that many independent artists already have sophisticated creative instincts about pacing, emotional arc, and visual tone. What they lack is not creative judgment — it is the technical vocabulary to translate that judgment into a film-style music video.

Audio-Reactive, Structure-Aware Video Generation

Reading the Song as a Blueprint

The defining technological advance in current-generation tools is genuine audio reactivity. Rather than treating the audio as a static background track, platforms like Freebeat, a dedicated AI music video generator, treat the music as a blueprint for the entire visual production.

The system analyzes a track's full architecture before a single frame is generated. This analysis includes:

- BPM and Beat Mapping: Ensuring every cut is mathematically aligned with the rhythm.

- Bar Groupings: Identifying patterns in music to maintain visual consistency within specific phrases.

- Song Structure: Distinguishing between the intro, verses, choruses, and the outro to adjust visual intensity.
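To make the first two steps concrete, here is a minimal Python sketch of the simplest version of that analysis: turning a known, constant BPM into beat and bar timestamps that cuts could snap to. It assumes a steady tempo, a 4/4 time signature, and a known first-beat offset. The `BeatGrid` type and `build_beat_grid` function are hypothetical illustrations, not Freebeat's implementation; a real track with tempo drift would need per-beat detection instead of a fixed grid.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class BeatGrid:
    beat_times: list[float]  # seconds at which each beat falls
    bar_starts: list[float]  # seconds at which each bar begins


def build_beat_grid(bpm: float, duration_s: float, beats_per_bar: int = 4,
                    first_beat_s: float = 0.0) -> BeatGrid:
    """Build an idealized beat/bar grid from a constant tempo (hypothetical helper).

    Assumes a fixed BPM and time signature for the whole track.
    """
    if bpm <= 0:
        raise ValueError("bpm must be positive")
    seconds_per_beat = 60.0 / bpm
    beat_times: list[float] = []
    i = 0
    while True:
        t = first_beat_s + i * seconds_per_beat
        if t >= duration_s:
            break
        beat_times.append(round(t, 3))
        i += 1
    # Every beats_per_bar-th beat opens a new bar (phrase-level grouping).
    bar_starts = beat_times[::beats_per_bar]
    return BeatGrid(beat_times, bar_starts)
```

For a 120 BPM track, `build_beat_grid(120, 4.0)` places a beat every 0.5 seconds and starts a new bar every 2 seconds, which gives an editor (human or automated) a grid of candidate cut points.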

This structural analysis then drives every visual decision in the output. Beat drops trigger specific edit cuts, while chorus sections might shift to higher-energy, dynamic editing sequences. Conversely, quieter passages allow the "camera" to settle into slow, atmospheric moments with depth and shadows. In this model, the video is built around the song, not placed on top of it.
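A rough sketch of how section labels could drive editing pace: each section type holds a shot for a different number of beats, and beat drops always force a cut. The `BEATS_PER_SHOT` table, the section labels, and `plan_cuts` are hypothetical; the numbers are illustrative and not values any platform is known to use.

```python
from __future__ import annotations

# Hypothetical pacing table: beats to hold each shot, per section type.
# Quieter sections hold longer; choruses cut faster.
BEATS_PER_SHOT = {"intro": 8, "verse": 4, "chorus": 2, "outro": 8}


def plan_cuts(bpm: float, sections: list[tuple[str, float, float]],
              drops: tuple[float, ...] = ()) -> list[float]:
    """Return sorted cut times in seconds.

    sections: (label, start_s, end_s) tuples covering the track.
    drops: times (seconds) of beat drops, which always get a cut.
    """
    seconds_per_beat = 60.0 / bpm
    cuts: set[float] = set()
    for label, start, end in sections:
        # Unknown labels fall back to a medium pace of 4 beats per shot.
        hold = BEATS_PER_SHOT.get(label, 4) * seconds_per_beat
        t = start
        while t < end - 1e-9:  # small tolerance avoids a cut exactly at section end
            cuts.add(round(t, 3))
            t += hold
    cuts.update(round(d, 3) for d in drops)
    return sorted(cuts)
```

At 120 BPM, a 4-second intro gets a single long shot, while a chorus from 4 to 6 seconds cuts every second; a drop at 5.5 seconds adds one more cut on top of that rhythm.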

Beyond simple reactivity, the platform applies "director-level" shot logic. It uses A-roll for primary performance footage to anchor the viewer's attention and B-roll for environmental and atmospheric context. It also identifies moments of musical intensity and inserts detail shots, such as hands on an instrument or microphone close-ups, that reward the viewer's attention. The resulting video reads as intentionally planned because, at its core, it is structurally synchronized with the audio.
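The shot logic described above can be approximated with a simple rule-based selector. This sketch assumes each segment already has a section label and a normalized intensity value between 0 and 1; the `choose_shot` and `shot_list` functions and the 0.8 threshold are hypothetical and only illustrate the A-roll / B-roll / detail-shot split, not how any specific platform decides.

```python
from __future__ import annotations


def choose_shot(section: str, energy: float, detail_threshold: float = 0.8) -> str:
    """Pick a shot type for one segment (hypothetical rules).

    energy: normalized musical intensity in [0, 1], an assumed input.
    """
    if energy >= detail_threshold:
        return "detail"  # peak intensity: hands on an instrument, mic close-up
    if section in ("verse", "chorus"):
        return "a-roll"  # vocal sections anchor on the performer
    return "b-roll"      # intros, outros, breaks: environment and atmosphere


def shot_list(segments: list[tuple[str, float]]) -> list[str]:
    """Map (section, energy) pairs to shot types, in order."""
    return [choose_shot(section, energy) for section, energy in segments]
```

Usage: `shot_list([("intro", 0.2), ("verse", 0.5), ("chorus", 0.9), ("outro", 0.3)])` yields B-roll, A-roll, a detail shot at the chorus peak, then B-roll again.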

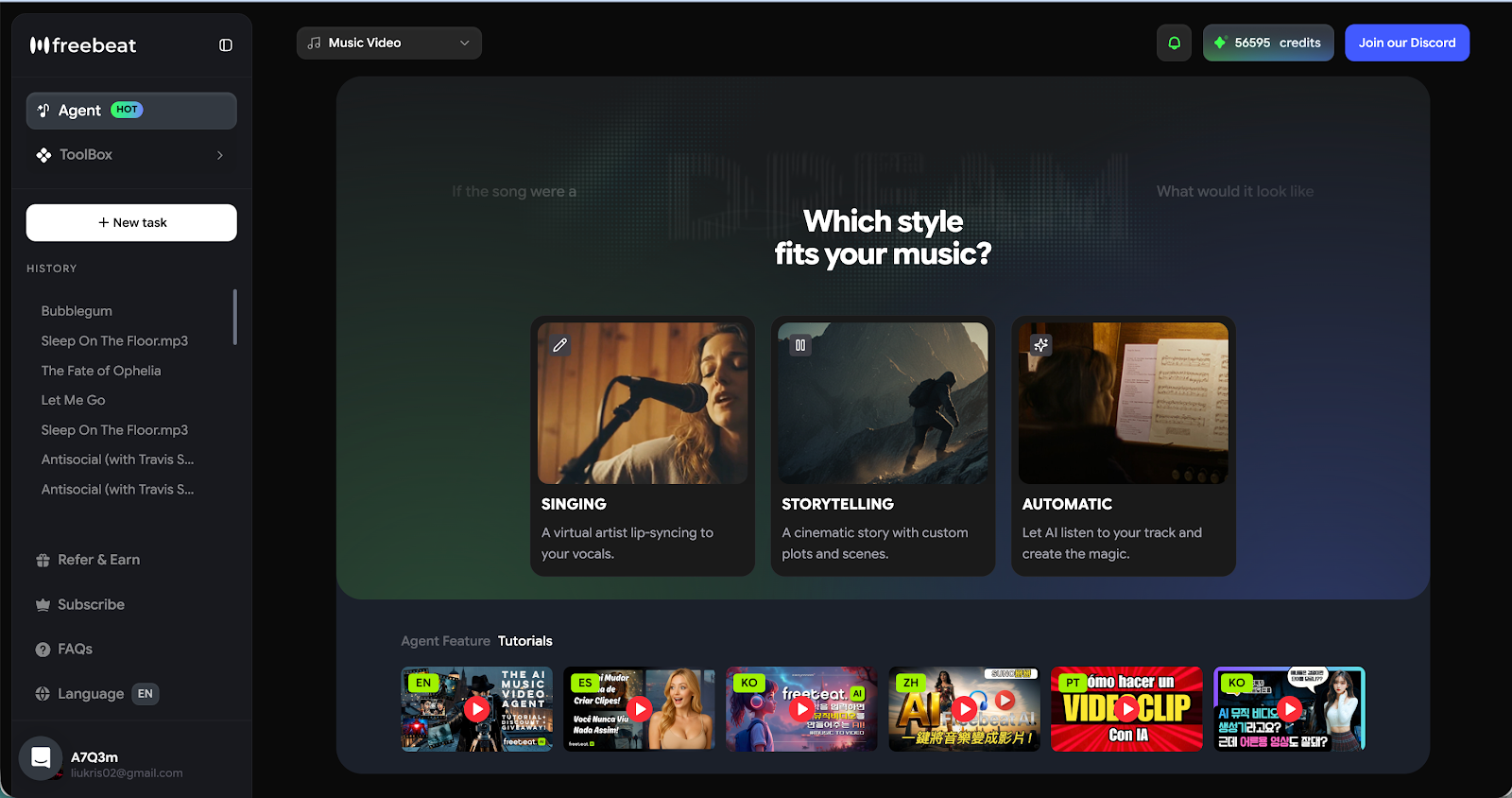

Three Modes for Three Different Creative Goals

To accommodate different artistic visions, the workflow is categorized into three distinct modes:

- Storytelling Mode: Built for narrative-driven music videos where the visual sequence follows a genuine emotional arc that complements the lyrical themes.

- Stage Performance Mode: Replicates the high-energy feel of a live concert, featuring dramatic lighting, stage smoke, and close-up performance shots for DJs and electronic producers.

- Automatic Mode: A "single-click" solution where the AI analyzes the track and selects the best visual approach autonomously, requiring no manual preprocessing or learning curve.

This frictionless workflow is supported by high input flexibility. Creators can upload MP3, WAV, or MP4 files, or even paste direct links from platforms like Suno, Udio, TikTok, YouTube, or SoundCloud to move immediately from audio creation to visual production.
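Accepting both uploads and links implies a first step that sorts an input into "file" or "link". Below is a minimal sketch of that check. The extension set comes from the formats listed above; the exact domains for each platform (for example `suno.com` or `youtu.be`) are assumptions, and `classify_input` is a hypothetical name rather than part of any real API.

```python
from __future__ import annotations

from pathlib import PurePosixPath
from urllib.parse import urlparse

ACCEPTED_EXTENSIONS = {".mp3", ".wav", ".mp4"}
# Assumed domains for the platforms named in the text.
LINK_HOSTS = {"suno.com", "udio.com", "tiktok.com", "youtube.com",
              "youtu.be", "soundcloud.com"}


def classify_input(source: str) -> str:
    """Return "link" or "file", or raise ValueError if unsupported."""
    parsed = urlparse(source)
    if parsed.scheme in ("http", "https"):
        host = (parsed.hostname or "").lower()
        # Match the bare domain or any subdomain (www., m., etc.).
        if any(host == h or host.endswith("." + h) for h in LINK_HOSTS):
            return "link"
        raise ValueError(f"unsupported link host: {host}")
    suffix = PurePosixPath(source).suffix.lower()
    if suffix in ACCEPTED_EXTENSIONS:
        return "file"
    raise ValueError(f"unsupported file type: {suffix or '(none)'}")
```

Matching on the parsed hostname, rather than searching the raw string, means a lookalike such as `evilyoutube.com` is rejected.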

Visual Style, Character Consistency, and Lip Sync

Style as Creative Identity

Visual style is a core component of an artist's brand language. Modern AI tools treat style with the same importance as the music itself, supporting aesthetics ranging from cinematic realism and anime to cyberpunk, neon noir, and fantasy illustration. Through natural-language prompts, creators can control color tones, mood, and atmospheric lighting to ensure the visual register matches the emotional register of the music.

For artists who have a general concept but lack the technical vocabulary to describe it, AI-assisted prompt expansion helps refine the creative direction without requiring design expertise.

Character Consistency and Lip Sync Accuracy

Historically, two technical problems have undermined the credibility of AI-generated videos: character consistency and lip sync accuracy.

Character consistency is vital because when a performer's appearance shifts between shots, the audience registers a sense of "discontinuity" that feels unnatural, even if they cannot name it. Freebeat addresses this by utilizing custom AI avatars built from uploaded images or a preset character library, maintaining stable facial clarity and identity across every scene transition.
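One common way to check identity drift, sketched here under stated assumptions, is to compare a face embedding from each shot against a reference embedding of the character and flag shots whose cosine similarity falls below a threshold. The embeddings are assumed to come from some face-recognition model (not shown), and the 0.8 threshold and `inconsistent_shots` helper are hypothetical; this is not a description of how Freebeat maintains consistency.

```python
from __future__ import annotations

import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length embedding")
    return dot / (norm_a * norm_b)


def inconsistent_shots(reference: list[float], shot_embeddings: list[list[float]],
                       threshold: float = 0.8) -> list[int]:
    """Indices of shots whose face embedding drifts too far from the reference."""
    return [i for i, emb in enumerate(shot_embeddings)
            if cosine_similarity(reference, emb) < threshold]
```

Flagged shots could then be regenerated or re-anchored to the reference image before the final edit.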

Lip sync accuracy is the second major hurdle. A music video where the mouth movements do not match the vocals immediately collapses the credibility of the entire performance. Current models now achieve over 90% lip sync accuracy, keeping mouth movements naturally aligned with the vocals for the full duration of a track—the essential threshold that separates a professional video from one that reads as artificial.
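Accuracy figures like this depend on how "accuracy" is defined. As a deliberately crude illustration, one could measure the fraction of video frames where the mouth's open/closed state agrees with whether the vocal is active. This is a hypothetical proxy, assuming both signals are already available as per-frame booleans at the same frame rate; it is not the metric behind the figure above, and serious evaluations typically compare phoneme-to-mouth-shape alignment instead.

```python
from __future__ import annotations


def lip_sync_agreement(vocal_active: list[bool], mouth_open: list[bool]) -> float:
    """Fraction of frames where mouth state matches vocal activity (hypothetical proxy)."""
    if len(vocal_active) != len(mouth_open):
        raise ValueError("sequences must have the same length")
    if not vocal_active:
        raise ValueError("empty sequences")
    matches = sum(v == m for v, m in zip(vocal_active, mouth_open))
    return matches / len(vocal_active)
```

With ten frames where only one frame shows a closed mouth during singing, the agreement is 0.9.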

All-in-One Studio: From Lyrics to Platform Export

A complete release strategy in 2026 requires more than just a single music video; it requires a suite of visual assets. Freebeat functions as an all-in-one multimodal studio, integrating the industry's leading models—including PixVerse, Veo, Kling, and Wan—into a single workspace.

The platform handles several critical release tasks:

- Lyrics Video Generation: Provides full control over fonts, timing (karaoke-style), and motion effects, exportable as video or .lrc files.

- Streaming Visuals: Generates looping animated album covers for Spotify Canvas and Apple Music.

- Multi-Platform Export: With one click, creators can export 16:9 for YouTube, 9:16 for TikTok/Reels, and 1:1 for Instagram feeds, with platform-specific framing built-in.

- AI-Generated Music: For creators who do not have an original track, the tool can generate custom music to match the desired visual tone.
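Two of these tasks rest on simple, well-defined mechanics. A basic .lrc file is plain text where each lyric line is prefixed with a `[mm:ss.xx]` timestamp, and reframing one master video for 16:9, 9:16, and 1:1 starts with computing a crop of the right aspect ratio. The sketch below shows both; `lrc_timestamp`, `to_lrc`, and `center_crop` are hypothetical helpers, and a naive center crop is an assumption, since platform-specific framing in a real tool may instead track the performer.

```python
from __future__ import annotations


def lrc_timestamp(seconds: float) -> str:
    """Format seconds as an LRC tag, e.g. 65.5 -> [01:05.50]."""
    total_cs = round(seconds * 100)  # work in centiseconds
    minutes, rest = divmod(total_cs, 6000)
    secs, cs = divmod(rest, 100)
    return f"[{minutes:02d}:{secs:02d}.{cs:02d}]"


def to_lrc(lines: list[tuple[float, str]]) -> str:
    """Serialize (start_seconds, text) pairs as basic line-level LRC."""
    return "\n".join(f"{lrc_timestamp(t)}{text}" for t, text in lines)


def center_crop(width: int, height: int, target_w: int, target_h: int) -> tuple[int, int, int, int]:
    """Largest centered crop (x, y, w, h) with aspect ratio target_w:target_h."""
    if width * target_h > height * target_w:
        # Source is wider than the target: keep full height, trim the sides.
        crop_h = height
        crop_w = height * target_w // target_h
    else:
        # Source is taller (or equal): keep full width, trim top and bottom.
        crop_w = width
        crop_h = width * target_h // target_w
    return (width - crop_w) // 2, (height - crop_h) // 2, crop_w, crop_h
```

For a 1920x1080 master, `center_crop(1920, 1080, 9, 16)` keeps a 607x1080 vertical strip starting at x = 656, while a 1:1 crop keeps the central 1080x1080 square.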

All content is also designed for safe publishing, meaning no watermarks, no copyright exposure, and creator-safe assets ready for immediate distribution across every major platform.

What Engagement-First Design Teaches Us Across Video Formats

The capabilities described above — audio-reactive editing, intentional shot logic, synchronized lyrics, platform-optimized export — reflect a coherent design philosophy: video that is engineered around the viewer's engagement rather than assembled for the creator's convenience. Every visual decision is tied to a structural principle, whether that's the beat of the music, the emotional arc of the narrative, or the technical requirements of the distribution platform.

This philosophy appears across very different video contexts. Platforms like Clixie AI apply the same engagement-first thinking to educational and corporate training content, layering interactive elements such as in-video quizzes, branching learning pathways, and clickable hotspots directly into video to turn passive viewers into active participants. The formats differ, but the underlying insight is shared: passive delivery makes for a fragile relationship between content and audience. Structuring video around the viewer's engagement, whether through audio-reactive cuts or interactive learning sequences, can improve retention and hold attention for longer.

For independent artists, the practical implication is that AI generation tools don't replace creative vision — they execute it at a production quality that was previously inaccessible. The artist still needs to know what emotional world their music lives in, who their audience is, and what a video that serves both looks like. What AI removes is the requirement to also be a director, an editor, and a post-production specialist to translate that vision into a finished, shareable video.

The Production Gap Is Closing — For Artists Who Use the Right Tools

The structural inequality between artists with production budgets and those without has been one of the more persistent features of the independent music landscape. AI music video generation is not eliminating that gap overnight, but it is narrowing it in ways that matter: cinematic results, stable character performance, accurate lip sync, story-driven visual content, and multi-platform distribution — all within a single workflow, with no editing skills needed and no learning curve beyond understanding which creative mode serves the project.

For singer-songwriters, content creators, and solo artists building a visual identity without a production budget, the tools available now produce output that would have been difficult to commission professionally only a few years ago. The question is no longer whether the quality is there. It is whether the artist's creative vision is clear enough to give the tool something meaningful to execute.
